How Claude Opus 4 Thinking Mode Powers Autonomous Coding Workflows in Small Teams

You're not a full-time developer. But somewhere along the way, you started doing developer things — building an internal tool here, automating a workflow there, managing a contractor you can't fully supervise. And you've tried using AI to help. It wrote code. Some of it even worked. But you kept finding yourself back in the loop, reprompting, fixing, re-explaining context that should have carried over from three messages ago.

That's not an AI problem. That's a prompting strategy problem. And Claude Opus 4's thinking mode is the specific fix most small teams don't know exists.

Claude Opus 4 thinking mode is an extended reasoning capability that lets the model work through a multi-step coding problem before returning output, catching contradictions, evaluating tradeoffs, and holding architectural context across decisions. Instead of responding to each prompt in isolation, the model reasons internally first. That changes what's possible on long-horizon tasks: you get fewer confident wrong answers and more code that actually fits the system it's going into.

What Is Claude Opus 4 Thinking Mode and Why Does It Matter for Code?

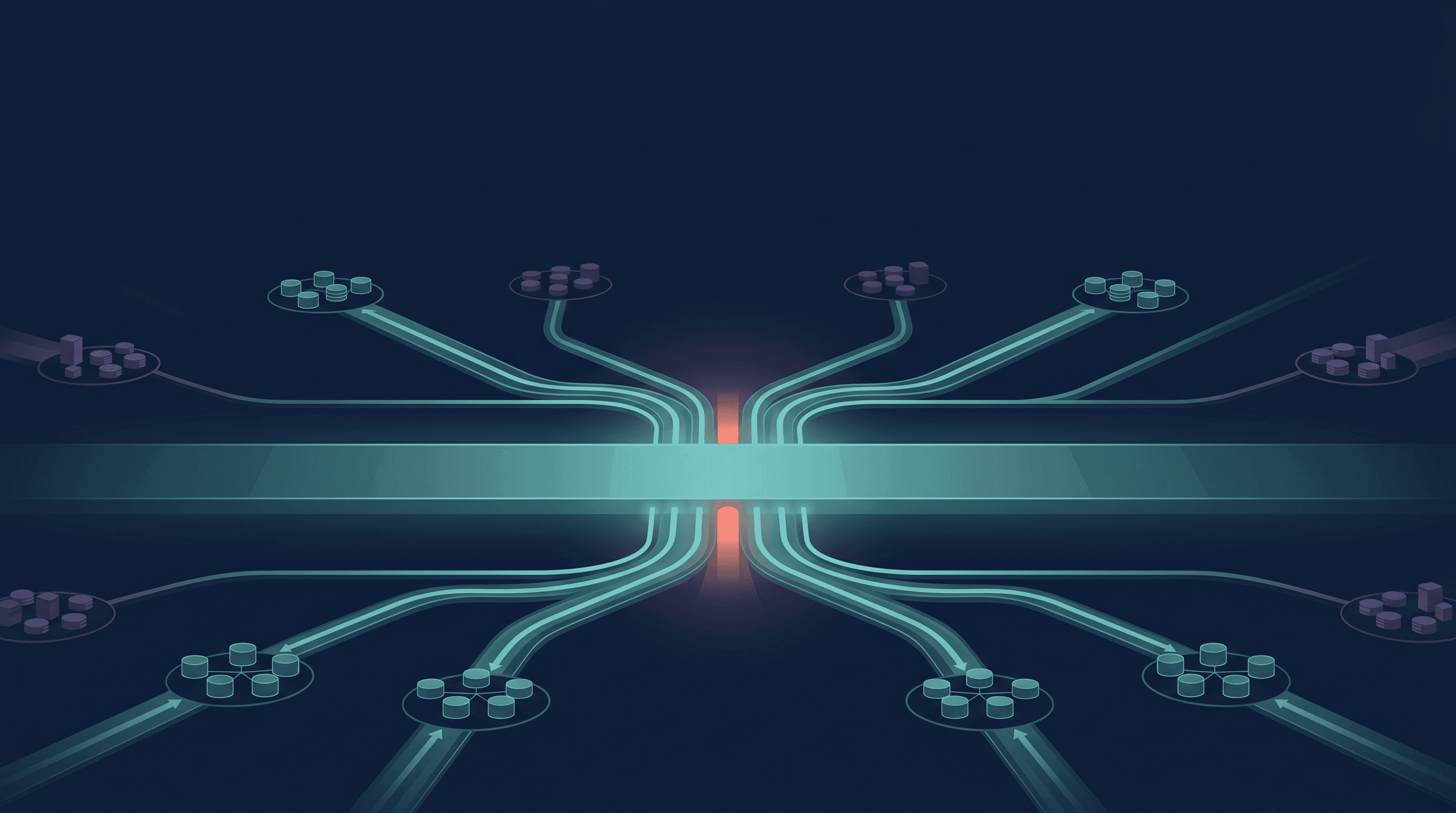

Most AI coding tools operate in a single pass. You send a prompt, the model predicts the next best tokens, and you get output. That works for short, self-contained tasks — rename this function, write a regex, explain this error. It breaks down the moment a task requires holding multiple constraints in mind simultaneously.

Think about what a real coding task actually involves. You're not just writing a function. You're writing a function that has to fit a specific schema, not conflict with an existing endpoint, handle three edge cases the product requires, and stay inside a rate limit you set two weeks ago. That's not one decision. That's a chain of decisions, where getting an early one wrong invalidates every decision built on top of it.

Standard single-pass models don't reason across those dependencies. They complete the immediate request and return output that looks correct but misses a constraint buried elsewhere in your system. You catch it four steps later, or you don't catch it until it breaks in production.

Claude Opus 4's extended thinking capability, as documented by Anthropic, gives the model a dedicated reasoning phase before it produces any output. It works through the problem the way a careful engineer would, flagging potential issues with its own plan before committing to code. For autonomous coding workflows, that internal deliberation is the difference between output you can hand off and output you have to audit line by line.
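As a rough sketch of what turning this on can look like, the snippet below builds the arguments for a Messages API request with extended thinking enabled. The helper name `build_thinking_request`, the default token numbers, and the model ID are assumptions for illustration, so check Anthropic's current documentation before relying on any of them. The two checks reflect the documented rules that the thinking budget has a minimum of 1,024 tokens and must be smaller than `max_tokens`.

```python
from typing import Any

# Assumed values for illustration; confirm the model ID and limits
# against Anthropic's current documentation.
MODEL_ID = "claude-opus-4-20250514"
MIN_THINKING_BUDGET = 1024  # documented minimum for budget_tokens


def build_thinking_request(prompt: str, max_tokens: int = 16000,
                           thinking_budget: int = 8000) -> dict[str, Any]:
    """Return keyword arguments for a Messages API call with extended thinking."""
    if thinking_budget < MIN_THINKING_BUDGET:
        raise ValueError("thinking budget is below the documented minimum")
    if thinking_budget >= max_tokens:
        raise ValueError("thinking budget must be smaller than max_tokens")
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        # This block is what switches the reasoning phase on.
        "thinking": {"type": "enabled", "budget_tokens": thinking_budget},
        "messages": [{"role": "user", "content": prompt}],
    }


request = build_thinking_request(
    "Refactor the weekly report job to use the new schema."
)
print(request["thinking"])

# With the official SDK installed, sending it would look like:
#   import anthropic
#   client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY
#   response = client.messages.create(**request)
```

Building the request separately from sending it keeps the budget checks testable without an API key.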

Why Does One-Shot Prompting Break Down on Real Coding Tasks?

Because one-shot prompting treats a multi-decision problem like a single-answer question.

Here's what that looks like in practice. A technical-adjacent founder needs to build an internal dashboard that pulls data from two APIs, formats it into a specific table structure, and runs on a weekly trigger. They open Claude and ask for the code. Claude returns something plausible. They test it. The API authentication logic doesn't match their actual credentials structure. They reprompt. Now the authentication works but the table format is wrong. They reprompt again. Now the format is right but the weekly trigger isn't configured for their environment.

By the time the thing actually runs, they've done nine separate prompts, re-explained context three times, and spent forty minutes they thought AI was supposed to save them.

The problem isn't that Claude is bad at code. The problem is that context collapsed between prompts. Each new message started from a partial understanding of the system. The model had no way to reason about how constraint A affected constraint F because it never held both at the same time.

This is where most small teams are operating — using Claude Opus 4 or even Sonnet in reactive, one-shot mode, then concluding that AI coding still requires a human at every decision point. That conclusion is accurate for the prompting strategy they're using. It's not accurate for what the model can actually do when you run it differently.

A single well-structured thinking-mode session, with the full context loaded at the start and extended reasoning enabled, can cover the same ground as those nine micro-prompts, with fewer errors, because the model is catching its own contradictions before they become your problem.
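One way to picture "full context loaded at the start" is a small brief that gets rendered into a single message. The sketch below is illustrative only: the `TaskBrief` class, its field names, and the example constraints are hypothetical, loosely modeled on the dashboard scenario above.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Everything the model needs, gathered before the first prompt."""
    goal: str
    constraints: list[str] = field(default_factory=list)
    environment: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as one message so every constraint is visible at once."""
        lines = [f"Goal: {self.goal}", "", "Constraints (all must hold together):"]
        lines += [f"{i}. {c}" for i, c in enumerate(self.constraints, start=1)]
        lines += ["", "Environment:"]
        lines += [f"- {e}" for e in self.environment]
        lines += ["", "Before writing code, check your plan against every constraint above."]
        return "\n".join(lines)


# Hypothetical details standing in for the dashboard example.
brief = TaskBrief(
    goal="Internal dashboard that merges data from two APIs into one table",
    constraints=[
        "Authenticate using the credentials structure described under Environment",
        "Output table must use the agreed column order",
        "Job runs on a weekly trigger",
    ],
    environment=[
        "Credentials are stored as environment variables",
        "Scheduler is the team's existing cron host",
    ],
)
print(brief.to_prompt())
```

The point of the structure is that authentication, table format, and scheduling arrive together, so the model can weigh them against each other in one reasoning pass instead of across three reprompts.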

What Does "Autonomous" Actually Mean for a Small Team's Coding Workflow?

Autonomous doesn't mean unsupervised forever. It means you're not required at every decision point.

For a team of two to five people, the realistic goal is a workflow where you define the task clearly, hand it to a reasoning model with the right context, and come back to review output rather than generate it. That's a fundamentally different operating mode than prompting your way through a problem in real time.

The workflows this tends to work for: building internal automation scripts where the logic has five or more branching conditions, writing API integration code where schema matching matters, refactoring existing codebases where the model needs to hold the old structure and the new requirements simultaneously, and debugging multi-file errors where the cause and the symptom are in different places. What these have in common is that they require genuine reasoning, not pattern matching. The model isn't retrieving a code template. It's working through a problem with real constraints, and extended thinking gives it space to do that before committing to an answer.

For teams weighing whether to keep paying for developer hours on tasks like these, the math shifts when you account for the full cost of one-shot prompting: the back-and-forth, the error correction, the context re-explanation. A focused thinking-mode session on Opus costs more per token than the same request on Sonnet. It can still cost less per solved problem.
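To make "per solved problem" concrete, here is a back-of-the-envelope sketch. Only the nine prompts and forty minutes come from the scenario earlier in this article. The token counts, per-token prices, review time, and hourly rate are made-up placeholders, not Anthropic's pricing, so swap in your own numbers.

```python
def cost_per_solved_problem(prompts: int, tokens_per_prompt: int,
                            price_per_million_tokens: float,
                            minutes_in_loop: float, hourly_rate: float) -> float:
    """Token spend plus the cost of the human time spent getting to a working result."""
    token_cost = prompts * tokens_per_prompt * price_per_million_tokens / 1_000_000
    human_cost = minutes_in_loop / 60 * hourly_rate
    return token_cost + human_cost


# One-shot path: nine prompts and forty minutes, as in the dashboard example.
# Token count, price, and hourly rate are hypothetical.
one_shot = cost_per_solved_problem(
    prompts=9, tokens_per_prompt=3_000, price_per_million_tokens=10.0,
    minutes_in_loop=40, hourly_rate=60.0,
)

# Thinking-mode path: one long session at a higher hypothetical price,
# followed by an assumed ten-minute review.
thinking = cost_per_solved_problem(
    prompts=1, tokens_per_prompt=40_000, price_per_million_tokens=50.0,
    minutes_in_loop=10, hourly_rate=60.0,
)

print(f"one-shot: ${one_shot:.2f}, thinking session: ${thinking:.2f}")
```

With inputs like these, human time dominates the total, which is why a session that costs more in tokens can still come out cheaper overall. If your review time doesn't actually drop, the comparison flips.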

If you're building out the broader operating system this fits into, the article on building an AI operating system for a sub-10-person knowledge business covers how to structure the layers around it.

How Do You Know When to Use Thinking Mode vs. a Faster Model?

The decision isn't about task complexity in the abstract. It's about whether the task has dependencies.

A task has dependencies when getting one part wrong breaks another part — when the code needs to fit an existing system, handle multiple edge cases in a specific way, or make architectural decisions that affect what comes next. Those tasks belong in thinking mode, on Opus.

A task is self-contained when the output can be evaluated in isolation. Write a function that does X. Format this JSON. Explain what this error means. Those tasks work fine on Sonnet or Haiku. Running them on Opus with extended thinking costs real money for no additional reliability.

The mistake most teams make: picking one model for everything and accepting whatever failure rate comes with using a faster model on reasoning-heavy tasks. The better approach is task routing — matching model and mode to what the task actually requires.

Sonnet handles the high-volume, low-dependency work. Opus with thinking mode handles the tasks where context collapse is the actual risk. Splitting the workload by task type, not by token budget, is where the cost efficiency actually comes from.
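The routing rule above is simple enough to write down. The sketch below is one hypothetical way to encode it: the `Task` fields mirror the three dependency signals described earlier (fits an existing system, multiple edge cases, decisions that affect later steps), and the `"opus"` and `"sonnet"` strings are placeholder labels, not API model IDs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    description: str
    must_fit_existing_system: bool = False
    edge_cases: int = 0
    affects_later_decisions: bool = False


@dataclass(frozen=True)
class Route:
    model: str              # placeholder label, not an API model ID
    extended_thinking: bool


def has_dependencies(task: Task) -> bool:
    """True when getting one part wrong could break another part."""
    return (task.must_fit_existing_system
            or task.edge_cases > 1
            or task.affects_later_decisions)


def route(task: Task) -> Route:
    """Send dependent tasks to the reasoning model, everything else to the fast one."""
    if has_dependencies(task):
        return Route(model="opus", extended_thinking=True)
    return Route(model="sonnet", extended_thinking=False)


print(route(Task("Format this JSON")))
print(route(Task("Add an endpoint to the billing service",
                 must_fit_existing_system=True, edge_cases=3)))
```

Notice that nothing in `route` looks at token budget or how hard the task sounds. The only question it asks is whether the pieces depend on each other.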

If you're trying to figure out whether this applies to what your team is actually building, that's exactly what a fit call is for.