How to Use Claude's Extended Thinking Mode for High-Stakes Business Decisions

You've been burned. You asked an AI a real question, got a confident answer, acted on it, and found out later it was wrong in a way that cost you time, money, or a client relationship. So now you use AI for drafts and summaries and nothing that actually matters.

That's a reasonable response to a real problem. But it's not the whole picture.

There's a mode in Claude most business owners don't know exists. It doesn't just answer faster or with more words. It reasons differently. And for the kind of decisions that keep you up at night, that difference is worth understanding.

What Is Claude's Extended Thinking Mode (And How Is It Different From a Normal Response)?

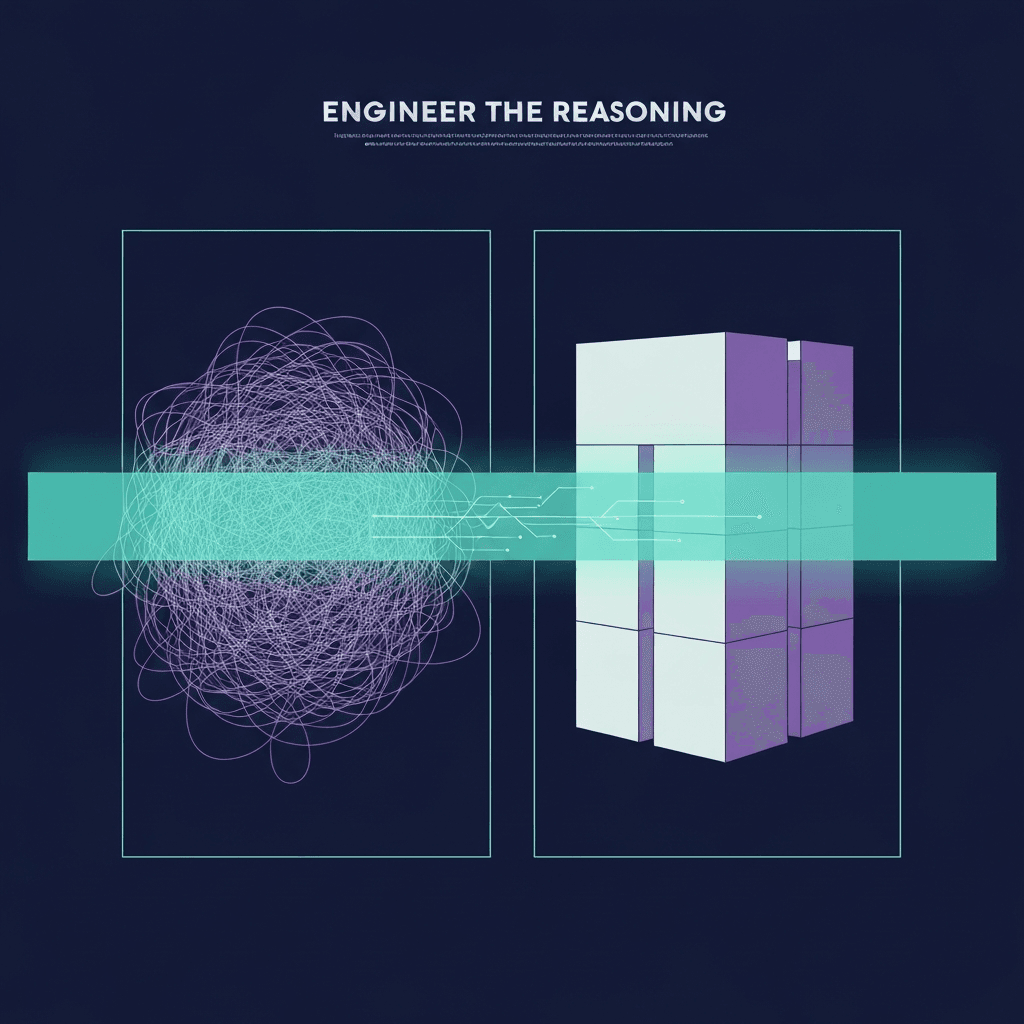

Standard Claude gives you an answer. Extended Thinking works through the problem first, then shows you that work alongside the answer.

Extended Thinking is an officially supported capability in Claude, available as a toggle in Claude.ai and a parameter in the API. It is not a colloquial term or a prompt trick. Anthropic ships it as a distinct mode for a specific reason: some problems require a different kind of reasoning, not just a longer response.

In standard mode, Claude reads your prompt and starts writing its answer right away, with no separate reasoning phase. It's fast. It's often good. But it has no dedicated space to question its own assumptions, explore competing interpretations, or catch itself going down the wrong path before it commits.

Extended Thinking mode works differently. Before Claude produces its visible response, it generates what Anthropic calls a "thinking" block, an internal scratchpad where the model works through the problem step by step. It considers multiple angles. It backtracks when something doesn't hold up. It reasons toward a conclusion before committing to an answer, rather than answering from its first impression.

The thinking block can run for seconds or, on harder problems, several minutes. You can see it in Claude.ai as a collapsed reasoning chain before the final answer. In API use, it's accessible as a separate content block.
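For API users, here is a minimal sketch of what "a separate content block" means in practice. It assumes the response content arrives as a list of blocks, each with a "type" field, where "thinking" blocks hold the reasoning and "text" blocks hold the final answer. The function name and the sample data are my own illustrations, not real model output.

```python
from typing import Any


def split_response_blocks(content: list[dict[str, Any]]) -> tuple[str, str]:
    """Separate the model's reasoning from its final answer.

    Assumes "thinking" blocks carry their text under the "thinking" key
    and "text" blocks carry it under the "text" key.
    """
    reasoning_parts: list[str] = []
    answer_parts: list[str] = []
    for block in content:
        if block.get("type") == "thinking":
            reasoning_parts.append(block.get("thinking", ""))
        elif block.get("type") == "text":
            answer_parts.append(block.get("text", ""))
        # Any other block type is skipped in this sketch.
    return "\n".join(reasoning_parts), "\n".join(answer_parts)


# Hand-written sample data for illustration only.
sample_content = [
    {"type": "thinking", "thinking": "Compare both pricing options..."},
    {"type": "text", "text": "Recommendation: raise rates for new clients only."},
]
reasoning, answer = split_response_blocks(sample_content)
print(answer)
```

Keeping the two apart lets you log the reasoning for later review while showing only the answer to whoever acts on it.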

Anthropic's benchmarks from the Claude 3.7 Sonnet release in February 2025 showed measurable accuracy gains on complex reasoning tasks compared to standard mode. That said, more reasoning steps don't universally improve results. On simple factual questions, Extended Thinking can overcomplicate. On ambiguous judgment calls, it reasons rigorously from whatever inputs it has, which means bad inputs still produce bad outputs, just with more elaborate justification. It can also reinforce a wrong assumption across multiple reasoning steps if that assumption appears early and nothing in your prompt contradicts it. The goal is not more thinking. It's the right kind of thinking on the right kind of problem.

When Should You Use Extended Thinking for Business Decisions?

Not always. That's actually the first thing to understand. Extended Thinking is slower and costs more tokens than a standard response. It is the wrong tool for drafting a follow-up email or summarizing a meeting transcript.
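To put a rough number on that overhead: as far as I know, thinking tokens are billed like ordinary output tokens, so the added cost scales with how long the model reasons. The sketch below is simple arithmetic under that assumption. The function name is mine, and both input figures are placeholders rather than Anthropic's prices.

```python
def thinking_overhead_usd(thinking_tokens: int, output_price_per_million_usd: float) -> float:
    """Estimate the added cost of the reasoning tokens in one request.

    Assumes thinking tokens are charged at the output-token rate.
    """
    return thinking_tokens * output_price_per_million_usd / 1_000_000


# Placeholder numbers for illustration only; check current pricing for your model.
extra = thinking_overhead_usd(thinking_tokens=8_000, output_price_per_million_usd=15.0)
print(f"Approximate added cost per request: ${extra:.2f}")
```

On a single high-stakes decision that kind of overhead is trivial. Multiplied across every email draft and summary in a workflow, it adds up, and the extra latency adds up with it.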

Here's the filter I apply: if you'd normally take this problem to a trusted advisor, a CFO, or a senior consultant before acting, it belongs in Extended Thinking mode. If the cost of a shallow answer is higher than the cost of a slower one, use it.

Here are the situations where it consistently earns its cost:

Pricing strategy decisions. Should you raise retainer rates by 30 percent, move to project-based pricing, or introduce a tiered model? That's not a calculation. It's a judgment call with six variables and downstream consequences. Extended Thinking works through the tradeoffs in a way standard mode flattens.

Vendor or partner selection. Choosing between two SaaS platforms, two contractors, or two go-to-market partners when the criteria are competing and the stakes are real. Extended Thinking can hold the full constraint set without collapsing it into a recommendation that sounds right but ignores two of your actual requirements.

Client risk assessment. Before you take on a new client or renew a problem engagement, Extended Thinking can help you reason through red flags, capacity implications, and contract risk in a structured way.

Org and role restructuring. When you're deciding whether to hire, promote, outsource, or eliminate a function, the answer depends on timing, cash flow, team dynamics, and a dozen other factors that standard AI responses tend to oversimplify.

Go/no-go strategic calls. Entering a new market, retiring a service line, or pivoting an offer. These decisions are too expensive to get wrong and too complex for a single-pass answer.

What ties these together: they all involve competing constraints, incomplete information, and consequences that compound. Standard mode handles each variable. Extended Thinking holds them in relation to each other.

How to Structure a High-Stakes Decision Prompt

The mode itself doesn't make a bad prompt good. Extended Thinking amplifies whatever quality of input you give it. A vague question still gets a vague answer, just with more sophisticated-sounding reasoning behind it. Here's how to give it something worth reasoning about.

1. State the actual decision, not the topic.

Weak: "Help me think about my pricing."

Strong: "I need to decide whether to raise my monthly retainer from $4,500 to $6,000 for existing clients starting Q3, or hold pricing and raise only for new clients. I need a recommendation with reasoning."

The difference matters more in Extended Thinking than in standard mode because every step of the reasoning chain builds on your framing. A specific decision gives the model something concrete to test. A topic gives it nothing to push against.

2. Give it the real constraints.

What's your cash position? What's your client retention risk? What did last year's renewal cycle look like? Extended Thinking can only reason with what you give it. Treat it like a senior advisor, not a search engine. Give it the context you'd give a CFO before asking for a recommendation.

3. Name the tradeoffs you already see.

If you already know that Option A protects revenue and Option B protects relationships, say that. Extended Thinking will interrogate those assumptions rather than just validate them. This is where it catches things you missed.

When I ran this exact prompt structure on a pricing decision, evaluating whether to raise rates for existing clients at renewal, Extended Thinking surfaced a dependency I'd overlooked: three of my six clients had auto-renewal clauses that would lock in the current rate for another year regardless of what I decided. Standard mode gave me a pros and cons list I already knew. Extended Thinking worked through the downstream logic of each option and caught the contractual constraint before I committed to a timeline.

4. Ask for a recommendation, not a list.

Lists are a hedge. A recommendation with stated assumptions is a reasoning output you can actually pressure-test. Ask Claude to commit to a position and explain what would have to be true for it to be wrong.

5. Enable Extended Thinking explicitly.

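In Claude.ai: turn on the "Extended thinking" toggle before you send the prompt. In the API: add thinking: { type: "enabled", budget_tokens: [number] } to your request, where [number] is the maximum number of tokens Claude may spend reasoning. At the time of writing, Anthropic's documentation sets a minimum budget of 1,024 tokens and requires the budget to be smaller than max_tokens. It won't activate automatically on complex prompts. You have to turn it on.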
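If you work through the API, the five steps above can be sketched as two small helpers: one that assembles the prompt and one that builds the request with thinking turned on. This is a sketch under stated assumptions, not a definitive implementation. The function names, the YOUR_MODEL_ID placeholder, and the default token budgets are mine. The example values come from the pricing scenario in this article.

```python
def build_decision_prompt(decision: str, constraints: list[str], tradeoffs: list[str]) -> str:
    """Assemble a prompt that follows steps 1 to 4."""
    lines = [f"Decision: {decision}", "", "Constraints:"]
    lines += [f"- {c}" for c in constraints]
    lines += ["", "Tradeoffs I already see:"]
    lines += [f"- {t}" for t in tradeoffs]
    lines += [
        "",
        "Give me one recommendation, not a list of options. State your "
        "assumptions and explain what would have to be true for the "
        "recommendation to be wrong.",
    ]
    return "\n".join(lines)


def build_request(prompt: str, model: str, budget_tokens: int = 4000,
                  answer_tokens: int = 2000) -> dict:
    """Build keyword arguments for a Messages API call with thinking enabled (step 5)."""
    return {
        "model": model,
        # max_tokens has to leave room for the thinking budget plus the answer.
        "max_tokens": budget_tokens + answer_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


prompt = build_decision_prompt(
    decision=(
        "Raise my monthly retainer from $4,500 to $6,000 for existing clients "
        "starting Q3, or hold pricing and raise only for new clients."
    ),
    constraints=[
        "Six current clients.",
        "Three of the six have auto-renewal clauses that lock in the current rate for another year.",
    ],
    tradeoffs=[
        "Raising rates for everyone protects revenue.",
        "Holding rates for existing clients protects relationships.",
    ],
)
request = build_request(prompt, model="YOUR_MODEL_ID")  # placeholder, not a real model name
print(request["thinking"])

# With the official Python SDK installed, this would be sent roughly as:
#   import anthropic
#   response = anthropic.Anthropic().messages.create(**request)
```

Building the request as a plain dictionary keeps the prompt structure reviewable before anything is sent, which is useful when the whole point is to check your inputs.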

Where It Fails (And When to Put It Down)

Extended Thinking makes Claude's reasoning more rigorous. It does not make Claude omniscient.

The most common failure mode I've seen: you give it a decision about a domain you know well, leave out a key piece of context, and it produces a thorough analysis of the wrong problem. The reasoning looks sound. The conclusion is confidently stated. And it's wrong because the input was incomplete. Extended Thinking doesn't know what you didn't tell it.

It also has a specific failure pattern on highly ambiguous judgment calls, especially ones involving relationships, reputation, or long-term trust. A seasoned human will sometimes override a well-reasoned recommendation based on pattern recognition from years in a specific niche. Extended Thinking doesn't have that. It reasons from the information you gave it, not from lived experience.

And it genuinely wastes time on simple decisions. Quick operational questions, standard follow-up emails, anything with a clear right answer: none of these need it. Using Extended Thinking everywhere is like asking a CFO to review every invoice. The tool is right. The application is wrong.

How I Use Extended Thinking Inside Business AI Systems

When I build Business AI Operating Systems for service firm founders, Extended Thinking isn't something I drop in everywhere. I use it at specific decision nodes, places in a workflow where the output carries real downstream consequence.

Client escalation routing. Pricing logic for custom scopes. Risk scoring on new business opportunities. These are the points where a shallow answer compounds into a bad outcome three steps later, and where the extra latency is worth it because speed isn't the constraint.

The rest of the system (intake flows, summaries, drafts, status updates) runs on standard mode. Matching the tool to the task isn't about using the most powerful option everywhere. It's about knowing which moments actually require it. That's the same judgment call you make when you decide whether to handle something yourself or loop in your most expensive advisor.
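One way to express that split in code is a small lookup that decides, per workflow step, whether to attach a thinking setting to the request. This is a hypothetical sketch: the node names are labels I made up from the examples above, and the default budget is a placeholder.

```python
from typing import Optional

# Hypothetical node names; rename these to match your own workflow.
HIGH_STAKES_NODES = {
    "client_escalation_routing",
    "custom_scope_pricing",
    "new_business_risk_scoring",
}


def thinking_config(node: str, budget_tokens: int = 4000) -> Optional[dict]:
    """Return a thinking setting for decision nodes, or None for routine steps."""
    if node in HIGH_STAKES_NODES:
        return {"type": "enabled", "budget_tokens": budget_tokens}
    return None


for node in ["custom_scope_pricing", "meeting_summary"]:
    mode = "extended thinking" if thinking_config(node) else "standard"
    print(f"{node}: {mode}")
```

Keeping the list of high-stakes nodes explicit and short makes the choice a deliberate one, instead of something that drifts toward "on everywhere."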

If you want to understand how this fits into a broader AI system for your business, "How to Build an AI Operating System for a Sub-10 Person Knowledge Business" covers the architecture.

If you're making decisions complex enough to keep you in the building longer than you want to be, and you're not using Extended Thinking for those calls, you're leaving a real capability on the table.

If you want to talk through where this fits in your specific decision workflow, book a discovery call. No pitch. Just an honest look at where your current process has gaps and whether there's a better tool for the moments that actually matter.